The Technofascist Technology Quiz

I’ve revised my definition of technofascism since that first post. Here are the current working definitions:

Short: Fascism amplified by technology.

Medium: Technofascism describes the collusion of large technology firms, right-wing billionaires, and tech culture with authoritarian, extractive, violent, and anti-democratic political agendas.

Long: This convergence of technological and financial power with fascist state inclinations leads to products that directly threaten democracy, human life, and the planet: AI and advanced technologies placed in the service of violence, war, and military occupation; the surveilling and policing of citizens and residents; the systematic traumatization of public servants; the racialized deportation and internment of migrants; the censorship and silencing of free speech; and the elevation of propaganda, misogyny, transphobia, hate speech, and mob violence on media platforms.

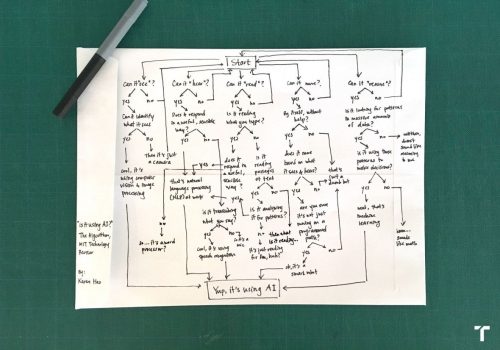

This quiz can be used as a teaching and reflection exercise to begin thinking together about the anti-democratic nature of the production process, governance, and impacts of specific technologies, systems, and platforms. This includes AI, but it is not limited to AI. Choose any tech product and research it well enough to answer the following questions.

Do billionaires own, control and profit from this technology?

Is a military or law enforcement agency a main client for this technology?

Is the data infrastructure for this technology centralized, corporate and proprietary?

Do the firms that control this technology spend millions to resist regulation?

Is this technology marketed to bosses? Would workers reject it?

Does this technology surveil or cage or kill people?

In the hands of fascists, could this technology be used to surveil or cage or kill people?

Does this technology sort people into deserving and undeserving groups?

Does this technology ration public services (housing, health care, education, food benefits)?

Is this technology built on the theft and looting of human creative labor?

Does this technology automate labor that workers value and do not want automated?

Does this technology incentivize the exploitation, degradation or harassment of trans people, Black people, women and/or other minoritized groups? Do its owners profit from those behaviors?

Does this technology incentivize the circulation of propaganda, disinformation, or spectacle? Do its owners profit from those behaviors?

Does this technology require more water and energy to develop and run than a small city?

Are any workers in the supply chain of this tech exploited and underpaid? Are any of them, anywhere in the supply chain, prevented from unionizing?