crontab guru

Regenerate initramfs for the New Kernel

To fix the issue, you need to regenerate the initramfs for the new kernel version. Run the following command in the terminal:

sudo update-initramfs -u -k <version>

Replace <version> with the actual kernel version string for the kernel that you were unable to boot into. For example, it might look something like 4.15.0-36-generic.

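You can find the kernel version by running uname -r if needed. Note that uname -r reports the kernel that is currently running; to see every installed kernel version, list the vmlinuz-* files in /boot (for example, ls /boot/vmlinuz-*).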
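If you are not sure which versions are installed, the sketch below lists them from the vmlinuz-<version> file names in /boot, sorting numerically so that a -100- build comes after a -91- build. It assumes the Debian/Ubuntu naming convention (/boot/vmlinuz-<version>); installed_kernels and natural_key are hypothetical helper names, not part of any tool.

```python
import re
from pathlib import Path


def natural_key(version: str) -> list:
    # re.split with a capture group alternates text and digit runs, so odd
    # indexes are numbers: "5.15.0-91-generic" -> ["", 5, ".", 15, ...].
    parts = re.split(r"(\d+)", version)
    return [int(p) if i % 2 else p for i, p in enumerate(parts)]


def installed_kernels(boot_dir: str = "/boot") -> list[str]:
    """Return the version strings of every vmlinuz-<version> in boot_dir."""
    prefix = "vmlinuz-"
    versions = [p.name[len(prefix):] for p in Path(boot_dir).glob(prefix + "*")]
    return sorted(versions, key=natural_key)
```

Each string returned by installed_kernels() is a candidate value for <version> in sudo update-initramfs -u -k <version>.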

Update GRUB

Once the initramfs has been successfully generated, update the GRUB bootloader by running:

sudo update-grub

This command ensures that GRUB recognizes the updated kernel and its corresponding initramfs.

To terminate the session of the remotely logged-in user “linuxiac,” we will use the pkill command with the -KILL option, which sends SIGKILL so that the processes are terminated immediately (not gracefully). Use the -t flag to specify the name of the TTY.

pkill -KILL -t pts/1

The second approach terminates a user session by process ID. To do this, run the w command again to get a list of logged-in users along with their associated TTY/PTS.

Then, once we’ve identified the TTY/PTS session, use the ps command with “-ft” parameters to find its PID:

ps -ft [TTY/PTS]


Finally, use the kill command with the -9 switch (unconditionally terminate a process), passing the process ID. For example:

kill -9 4374
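ps -ft selects processes by their controlling terminal. The sketch below does something similar by reading /proc directly: field 7 of /proc/<pid>/stat (tty_nr) holds the terminal's device number, which is compared with the device number of /dev/<tty>. It is Linux-specific, and tty_nr_from_stat and pids_on_tty are hypothetical helper names.

```python
import os


def tty_nr_from_stat(stat_line: str) -> int:
    # Field 2 (comm) is in parentheses and may contain spaces, so only split
    # what follows the last ")". That remainder starts at field 3 (state),
    # which makes tty_nr (field 7) the fifth item.
    after_comm = stat_line.rsplit(")", 1)[1].split()
    return int(after_comm[4])


def pids_on_tty(tty: str) -> list[int]:
    """Return the PIDs whose controlling terminal is /dev/<tty>, e.g. 'pts/1'."""
    wanted = os.stat(f"/dev/{tty}").st_rdev  # device number of the terminal
    pids = []
    for entry in os.listdir("/proc"):
        if not entry.isdigit():
            continue  # skip non-process entries such as /proc/meminfo
        try:
            with open(f"/proc/{entry}/stat") as f:
                if tty_nr_from_stat(f.read()) == wanted:
                    pids.append(int(entry))
        except (FileNotFoundError, ProcessLookupError, PermissionError):
            continue  # the process exited (or is hidden) while we were scanning
    return sorted(pids)
```

For example, pids_on_tty("pts/1") returns the PIDs you would then pass to kill -9.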

Don’t throw out your old computer just yet. Use a lightweight Linux distro and revive that decades-old system.

The command to use depends on which distribution of Linux you’re using. For Debian-based Linux distributions, the command is deluser; for the rest of the Linux world, it is userdel.

sudo deluser --remove-home USERNAME
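The choice between the two commands can be scripted. This minimal sketch assumes that having deluser on the PATH means a Debian-style system; delete_user_command and delete_user are hypothetical helpers, and -r is userdel's own option for also removing the home directory.

```python
import shutil
import subprocess


def delete_user_command(username: str) -> list[str]:
    """Build the command that deletes a user together with their home directory."""
    if shutil.which("deluser"):
        # Debian family: deluser is a friendlier front end to userdel.
        return ["sudo", "deluser", "--remove-home", username]
    # Elsewhere: userdel -r removes the home directory and mail spool as well.
    return ["sudo", "userdel", "-r", username]


def delete_user(username: str) -> None:
    # Raises subprocess.CalledProcessError if the command fails.
    subprocess.run(delete_user_command(username), check=True)
```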

You can find a window’s class name by running xprop from the command line and clicking on the target window (xprop displays the window’s X properties); then search the xprop output for the WM_CLASS(STRING) property, which will show the window class name you should use with picom.

Tips:

Use xprop | grep WM_CLASS instead of plain xprop to show only the window class line.

Speaking anecdotally, if the two values of WM_CLASS(STRING) are different, use the second one (the first is the instance name, the second is the class name).
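If you need the class name in a script (for example, to generate picom rules), the rule above can be applied to xprop's output. In this sketch window_class and pick_window_class are hypothetical helper names; running xprop WM_CLASS prints only that property after you click a window.

```python
import re
import subprocess


def window_class(wm_class_line: str) -> str:
    """Pick the class name out of an xprop WM_CLASS(STRING) line.

    The property holds the instance name first and the class name second;
    if only one value is present, that value is returned.
    """
    values = re.findall(r'"([^"]*)"', wm_class_line)
    if not values:
        raise ValueError(f"no WM_CLASS values in {wm_class_line!r}")
    return values[1] if len(values) > 1 else values[0]


def pick_window_class() -> str:
    # Waits for you to click a window, then parses xprop's single output line.
    result = subprocess.run(["xprop", "WM_CLASS"], capture_output=True,
                            text=True, check=True)
    return window_class(result.stdout)
```

For an xterm window, xprop prints WM_CLASS(STRING) = "xterm", "XTerm", so window_class returns "XTerm".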

# Install new PHP 8.3 packages
sudo apt install php8.3 php8.3-cli php8.3-{bz2,curl,mbstring,intl}

# Install FPM OR Apache module
sudo apt install php8.3-fpm
# OR
# sudo apt install libapache2-mod-php8.3

# On Apache: Enable PHP 8.3 FPM
sudo a2enconf php8.3-fpm

# When upgrading from an older PHP version:
sudo a2disconf php8.2-fpm

## Remove old packages (quoted so the shell does not expand the glob)
sudo apt purge 'php8.2*'

nmap is a network mapping tool. It works by sending various network messages to the IP addresses in the range we provide. It can deduce a lot about the device it is probing by interpreting the type of responses it gets.

Let’s kick off a simple scan with nmap. We’re going to use the -sn (no port scan) option. This tells nmap not to probe the ports on the devices for now, so it will do a lightweight, quick scan.

Even so, it can take a little time for nmap to run. Of course, the more devices you have on the network, the longer it will take. It does all of its probing and reconnaissance work first and then presents its findings once the first phase is complete. Don’t be surprised when nothing visible happens for a minute or so.

The IP address we’re going to use is the one we obtained using the ip command earlier, but with the final number set to zero. That is the first possible IP address on this network. The "/24" tells nmap to scan the entire range of this network. The parameter "192.168.4.0/24" translates as "start at IP address 192.168.4.0 and work right through all IP addresses up to and including 192.168.4.255".

Note we are using sudo.

sudo nmap -sn 192.168.4.0/24
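The same arithmetic can be checked with Python's standard ipaddress module. Starting from an address-with-prefix like the one the ip command prints (192.168.4.27/24 here is a made-up example), the sketch derives the network to hand to nmap plus its first and last addresses; scan_range is a hypothetical helper name. A /24 fixes the first 24 of 32 bits, leaving 8 bits, so the range holds 2^8 = 256 addresses.

```python
import ipaddress


def scan_range(address_with_prefix: str) -> tuple[str, str, str, int]:
    """From e.g. '192.168.4.27/24', return (network, first, last, count)."""
    network = ipaddress.ip_interface(address_with_prefix).network
    # network[0] is the network address (final number set to zero) and
    # network[-1] is the broadcast address, the last one in the range.
    return str(network), str(network[0]), str(network[-1]), network.num_addresses
```

scan_range("192.168.4.27/24") returns ("192.168.4.0/24", "192.168.4.0", "192.168.4.255", 256); the first element is the argument passed to nmap -sn.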

…we’ll show you how to use the dmidecode command to get information about your motherboard, as well as how to use the lshw command to get more information about your system.

One workaround (for now) is to add GRUB_DISABLE_OS_PROBER=false to /etc/default/grub and then run sudo update-grub.
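If you prefer to script the change, the sketch below edits the file's text idempotently: it replaces the first existing (possibly commented-out) GRUB_DISABLE_OS_PROBER line, or appends one if none exists. set_grub_option is a hypothetical helper; writing /etc/default/grub back requires root, and the change only takes effect after update-grub regenerates the menu.

```python
import re


def set_grub_option(config: str, key: str, value: str) -> str:
    """Return config with KEY=VALUE set, replacing the first (possibly
    commented-out) KEY= line or appending a new line if there is none."""
    new_line = f"{key}={value}"
    # [ \t]* rather than \s* so the match can never swallow a preceding newline.
    pattern = re.compile(rf"^#?[ \t]*{re.escape(key)}=.*$", re.MULTILINE)
    updated, count = pattern.subn(lambda _match: new_line, config, count=1)
    if count == 0:
        if updated and not updated.endswith("\n"):
            updated += "\n"
        updated += new_line + "\n"
    return updated
```

Usage: read /etc/default/grub into a string, pass it through set_grub_option(text, "GRUB_DISABLE_OS_PROBER", "false"), write the result back as root, then run sudo update-grub.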

Xfce is the oldest of the popular lightweight Linux desktop environments. It uses the GTK+ toolkit, just like the more popular GNOME interface that serves as the default for Ubuntu and Fedora.

MATE is a fork of GNOME 2 that formed when GNOME was transitioning to version 3.0. If you’ve ever used a version of GNOME from before 2011, then you’ve essentially used MATE. Although some things have changed, the fundamentals remain the same.

LXDE uses the now very dated GTK+ 2 library, so the lead developer decided to switch to Qt instead. He combined his efforts with the RazorQt team to create LXQt in order to replace LXDE.

Sway is a tiling Wayland compositor and a drop-in replacement for the i3 window manager for X11. It works with your existing i3 configuration and supports most of i3’s features, plus a few extras.

Sway allows you to arrange your application windows logically, rather than spatially. Windows are arranged into a grid by default, which maximizes the efficiency of your screen, and they can be quickly manipulated using only the keyboard.

I followed people’s advice and used xrandr to make my screen 720×1080 on just the usable part of the monitor. Simple and clean GNOME interface, and I didn’t need 2/3 of the screen for the terminal anyway!

Last summer, in the early days of the global pandemic, sitting at home and finally able to tackle some of my electronics projects now that I wasn’t wasting three hours a day commuting to a cubicle farm, I found myself ordering a new smartphone. Not the latest Samsung or Apple offering with their boring, predictable UIs, though. This was the Linux-only PinePhone, which lacks the standard Android interface plastered over an otherwise deeply hidden Linux kernel.

As a bit of a digital privacy nut, I also found the lack of Google software on this phone intriguing. Although there were plenty of warnings that the phone was still in its development stages, it seemed like I might be able to overcome any obstacles and actually use the device daily. What followed, though, was a challenging year of poking, prodding, and tinkering before it got to the point where it can finally replace an average Android smartphone, and its Google-based spyware, with something that suits my privacy-centered requirements, even if I do admittedly have to sacrifice some functionality.

The TOP500 list is published twice a year and ranks the most powerful supercomputers. There was only one change in the top ten, and it is a curious, slightly ironic one.

It is the arrival on the list of the Voyager-EUS2 supercomputer, developed by Microsoft Azure, with a performance of 30 petaflops. All well and good, but the really curious thing is that Microsoft’s supercomputer doesn’t run Windows; it runs Linux.

There are two main paths to look for autostart entries:

/etc/xdg/autostart: system-wide; most applications place their files here when they are installed.

[user’s home]/.config/autostart: the user’s own applications to start when that user logs in.

There is a security problem here: installing a package sometimes places an autostart file in the system-wide directory because the maintainer decided it is important, even when the package was pulled in only as a dependency. The next time the user logs in, an unwanted program might run and open ports!
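To audit what will actually start, the sketch below merges both directories the way the XDG Autostart specification describes: a file in the user's directory overrides a system file with the same name, and an entry with Hidden=true is skipped. It is simplified: it ignores $XDG_CONFIG_HOME/$XDG_CONFIG_DIRS overrides and keys such as OnlyShowIn, and the function names are hypothetical.

```python
from pathlib import Path

SYSTEM_DIR = Path("/etc/xdg/autostart")
USER_DIR = Path.home() / ".config" / "autostart"


def is_hidden(desktop_file: Path) -> bool:
    """True if the [Desktop Entry] group sets Hidden=true (entry disabled)."""
    in_entry = False
    for raw in desktop_file.read_text(errors="replace").splitlines():
        line = raw.strip()
        if line.startswith("["):
            in_entry = line == "[Desktop Entry]"
        elif in_entry and line.replace(" ", "") == "Hidden=true":
            return True
    return False


def autostart_entries(system_dir: Path = SYSTEM_DIR,
                      user_dir: Path = USER_DIR) -> dict[str, Path]:
    """Map each autostart .desktop file name to the file that takes effect.

    A user file with the same name as a system file overrides it, which is
    also how a user can switch off a system-wide entry (with Hidden=true).
    """
    entries: dict[str, Path] = {}
    for directory in (system_dir, user_dir):  # user_dir last, so it wins
        if directory.is_dir():
            for path in directory.glob("*.desktop"):
                entries[path.name] = path
    return {name: path for name, path in sorted(entries.items())
            if not is_hidden(path)}
```

Calling autostart_entries() with no arguments lists the entries that will run for the current user; anything unexpected in the output is worth a closer look.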

[Greeter]
#activate-numlock=false
#background=''
#background-color='#000000'
#draw-grid=false
#draw-user-backgrounds=true
#enable-hidpi=auto
#font-name='Ubuntu 11'
#group-filter=[]
#hidden-users=[]
#high-contrast=false
#icon-theme-name='gnome'
#logo=''
#onscreen-keyboard=false
#other-monitors-logo=''
#play-ready-sound=''
#screen-reader=false
#show-a11y=true
#show-clock=true
#show-hostname=true
#show-keyboard=true
#show-power=true
#show-quit=true
#theme-name='Adwaita'
#xft-antialias=true
#xft-dpi=96.0
#xft-hintstyle=hintslight
#xft-rgba=rgb

System-wide configuration of the Debian X session consists mainly of options inside the /etc/X11/Xsession.options file, and scripts inside the /etc/X11/Xsession.d directory. These scripts are all dotted in by a single /bin/sh shell, in the order determined by sorting their names. Administrators may edit the scripts, though caution is advised if you are not comfortable with shell programming.

This is a book about understanding the parts of your system and customizing them as you please.

You won’t find anything related to GUIs (Graphical User Interfaces) in here. That’s primarily because GUIs aren’t standardized or "equal" across Unix machines. Terminals, however, are, and for this reason I’m only going to cover CLIs (Command Line Interfaces) and TUIs (Terminal User Interfaces).

Rice burner is a pejorative term originally applied to Japanese motorcycles and which later expanded to include Japanese cars or any East Asian-made vehicles. Variations include rice rocket, referring most often to Japanese superbikes, rice machine, rice grinder or simply ricer.

The term is often defined as offensive or racist stereotyping. In some cases, users of the term assert that it is not offensive or racist, or else treat the term as a humorous, mild insult rather than a racial slur.

"Rice" is a word that is commonly used to refer to making visual improvements and customizations to one’s desktop. It was inherited from the practice of customizing cheap Asian import cars to make them appear faster than they actually were, which was also known as "ricing". Here on /r/unixporn, the word is accepted by the majority of the community and is used sparingly to refer to a visually attractive desktop upgraded beyond the default.

Fonts are vector data that gets rasterized when displayed to the user. Computer displays are low-DPI devices for complex reasons, and such a DPI (96) is not enough to display fonts without a myriad of trade-offs. The most notable of these are sub-pixel rendering, sub-pixel positioning, font hinting and anti-aliasing.

Each operating system approaches font rendering differently. To get the Windows-like rendering that I prefer, anti-aliasing, sub-pixel rendering, sub-pixel positioning and font hinting based on the bytecode embedded in fonts (basically, every step of the technological progress made in the last 30 years) need to be active.

If we’re not running a full-blown desktop environment like GNOME, KDE or Xfce, the chances of getting good font rendering after installing Xorg on top of a bare Linux base install (think Arch or Void Linux) are zero. This guide serves as a list of to-do items for getting decent font rendering on this sort of install.

Open a terminal and run this command:

echo $XDG_CURRENT_DESKTOP
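In a script, the same variable can be read directly. It may hold several colon-separated names (for example ubuntu:GNOME), and it may be unset under a bare window manager, so this sketch splits it into a list. current_desktops is a hypothetical helper name.

```python
import os


def current_desktops(environ=None) -> list[str]:
    """Split $XDG_CURRENT_DESKTOP (a colon-separated list) into its names."""
    environ = os.environ if environ is None else environ
    value = environ.get("XDG_CURRENT_DESKTOP", "")
    return [name for name in value.split(":") if name]
```

An empty list means no desktop environment advertised itself, which is common when running only a window manager.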

…simply type screenfetch in the terminal and it should show the desktop environment version along with other system information.

In the context of screens, DPI (Dots Per Inch) or PPI (Pixels Per Inch) refer to the number of device pixels per inch, also called “pixel density”. The higher the number, the smaller the size of the pixels, so graphics are perceived as more crisp and less pixelated.
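As a worked example, pixel density follows from a display's resolution and its diagonal size: PPI = sqrt(width² + height²) / diagonal in inches. The function below is a hypothetical helper that computes it; a 1920×1080 panel comes out at roughly 92 PPI at 24 inches but roughly 141 PPI at 15.6 inches, well above the 96 DPI that desktops traditionally assume.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density from the resolution and the panel's diagonal in inches."""
    diagonal_px = math.hypot(width_px, height_px)  # length of the diagonal in pixels
    return diagonal_px / diagonal_in
```

Dividing the result by 96 gives a rough idea of how much UI scaling a screen needs.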

This post is an explanation of my Openbox configurations and how to use them. Openbox is my favourite window manager, and it was the first window manager I ever used. At first, I didn’t even know how to make Openbox work. When I installed and ran it, I just got a blank black screen and didn’t even know how to launch any application. I followed several guides but couldn’t understand them, so I gave up. But after a while, I heard about Crunchbang. It came with a preconfigured Openbox and a complete set of software to make Openbox usable as a desktop. I really enjoyed Crunchbang and kept playing around with it. Playing with Crunchbang meant learning: I learned a lot about configuration files and theming, and also about modular applications that work well with Openbox. I also tried a lot of other popular window managers, but still couldn’t leave Openbox.


netstat -lt
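netstat -lt lists listening TCP sockets; on Linux the same information is exposed in /proc/net/tcp (IPv4) and /proc/net/tcp6 (IPv6), and the newer ss -lt shows it too. The sketch below reads only the IPv4 file; listening_tcp and decode_ipv4 are hypothetical helper names, and 0A is the kernel's state code for LISTEN.

```python
import socket
import struct

TCP_LISTEN = "0A"  # value of the "st" column for a listening socket


def decode_ipv4(hex_addr: str) -> tuple[str, int]:
    """Turn '0100007F:0035' into ('127.0.0.1', 53) on a little-endian host."""
    ip_hex, port_hex = hex_addr.split(":")
    # The kernel prints the address as a native-endian 32-bit integer, so
    # packing it back in native byte order ("=I") recovers the original bytes.
    ip = socket.inet_ntoa(struct.pack("=I", int(ip_hex, 16)))
    return ip, int(port_hex, 16)


def listening_tcp(path: str = "/proc/net/tcp") -> list[tuple[str, int]]:
    """Return (address, port) pairs for every IPv4 TCP socket in LISTEN state."""
    sockets = []
    with open(path) as f:
        next(f)  # skip the column-header line
        for line in f:
            fields = line.split()
            local_address, state = fields[1], fields[3]
            if state == TCP_LISTEN:
                sockets.append(decode_ipv4(local_address))
    return sorted(sockets)
```

Calling listening_tcp() on a Linux machine should list roughly the same IPv4 addresses and ports that netstat -ltn prints.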

The phone can now wake up from deep sleep when a call or text message comes in. You can therefore configure suspend to extend battery life without fear of missing an important call. This greatly improves battery life: thanks to this and other improvements, the device lasts a day and a half on average, or even two days depending on use; hooray!

Note that the alarm feature of gnome-clocks cannot wake the phone from suspend. So you can’t yet use the PinePhone to wake up in the morning, at least not without some tinkering, for example with a script or by installing the Wake Mobile app (I haven’t tested it).

Calls still has plenty of room for improvement. For example, on an incoming call it shows only the number, even when that number is in your contacts; showing the name would be more practical. It has also happened that the ringtone kept playing after I answered; funny… or not, depending on the situation.

Taking photos is very glitchy for now and the results leave a lot to be desired, but it improves with every update of Megapixels.

Battery life has a lot of room for improvement: you have to charge it every day, or even twice a day depending on use. I hope the developers will manage to optimize power consumption better in the not-too-distant future.

Calibre is an all-in-one ebook management software that allows you to read, manage, and convert digital books. It supports a multitude of ebook file formats for both reading and conversion purposes. It can sync your book collection and reading progress across multiple devices. Calibre is a completely free and open-source app that has been in development for over a decade. It is considered to be one of the most feature-packed and comprehensive ebook management suites available for desktop PCs.

Foliate is a free and open-source ebook reader for Linux. It aims to provide a clean, modern, and distraction-free interface for reading books on desktop computers. Its feature set is on par with other popular ebook readers and includes all necessary options for changing the book styles and formatting, syncing the reading progress, user bookmarks, text search, dictionary lookup, and so on. Foliate also comes with basic text-to-speech support for ebooks, something that other desktop readers lack.

The OpenWrt Project is a Linux operating system targeting embedded devices. Instead of trying to create a single, static firmware, OpenWrt provides a fully writable filesystem with package management. This frees you from the application selection and configuration provided by the vendor and allows you to customize the device through the use of packages to suit any application. For developers, OpenWrt is the framework to build an application without having to build a complete firmware around it; for users this means the ability for full customization, to use the device in ways never envisioned.

Other comparisons out there recommend operating systems that are long dead or no longer relevant. This is most likely because these "Top 10 Open Source Linux Firewall Software" lists are copied from year to year by non-technical users without doing an actual comparison.

Some operating systems have been superseded or simply stopped being maintained and became irrelevant. You want to avoid such systems for security reasons: these distros use outdated, insecure Linux/BSD kernels that can expose you to security exploits.

Scrot is a slightly more advanced, terminal-based screenshot tool. Similar to the others, you should already have it installed. If not, install it through the terminal by typing:

sudo apt-get install scrot

After having it installed, follow the instructions below to take a screenshot:

To take a screenshot of the entire screen:

scrot myimage.png

To take a screenshot of a selected area:

scrot -s myimage.png

…ALSA is responsible for giving a voice to all modern Linux distributions. It’s actually part of the Linux kernel itself, providing audio functionality to the rest of the system via an application programming interface (API) for sound card device drivers.

Users typically interact with ALSA using alsamixer, a text-based (ncurses) mixer program that can be used to configure sound settings and adjust the volume of individual channels. Alsamixer runs in the terminal, and you can invoke it just by typing its name. One particularly useful keyboard command is activated by hitting the M key. This command toggles channel muting, and it’s a fairly common fix to many questions posted on Linux discussion boards.

…the user-facing layer of the Linux audio system in most modern distributions is called PulseAudio.

The job of PulseAudio is to pass sound data between your applications and your hardware (which it accesses through ALSA), directing each application’s sound to an output destination such as your computer speakers or headphones. That’s why it’s commonly referred to as a sound server.

If you want to control PulseAudio directly, instead of interacting with it through a volume control widget or panel of some sort, you can install PulseAudio Volume Control (called pavucontrol in most package repositories).

If you feel that you have no use for the features provided by PulseAudio, you can either use pure ALSA or replace it with a different sound server.

The upcoming version of Windows 10 will feature a real Linux kernel in it as part of Windows Subsystem for Linux (WSL).

The so-called ‘love for Linux’ seems more like ‘lust for Linux’ to me. The Linux community is behaving like a teen-aged girl madly in love with a brute. Who benefits from this Microsoft-Linux relationship? Clearly, Microsoft has more to gain here. The WSL has the capacity of shrinking (desktop) Linux to a mere desktop app in this partnership.

By bringing the Linux kernel to the Windows 10 desktop, Microsoft lets programmers and software developers use Linux to set up programming environments and use tools like Docker for deployment. They won’t have to leave the Windows ecosystem, use a virtual machine, or log in to a remote Linux system through PuTTY or other SSH clients.

In the coming years, a significant share of the next generation of programmers won’t even bother trying desktop Linux, because they’ll get everything they need on systems that come with Windows preinstalled.

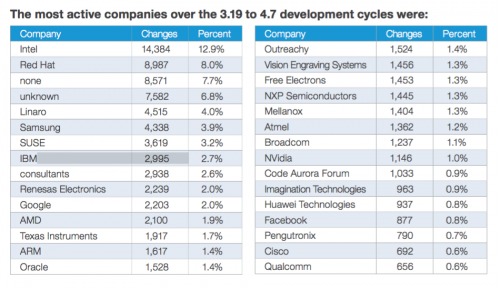

Just 7.7% of devs are unpaid.

As its importance has grown, development of Linux has steadily shifted from unpaid volunteers to professional developers. The 25th anniversary version of the Linux Kernel Development Report, released by the Linux Foundation today, notes that «the volume of contributions from unpaid developers has been in slow decline for many years. It was 14.6 percent in the 2012 version of this paper, 13.6 percent in 2013, and 11.8 percent in 2014; over the period covered by this report, it has fallen to 7.7 percent. There are many possible reasons for this decline, but, arguably, the most plausible of those is quite simple: Kernel developers are in short supply, so anybody who demonstrates an ability to get code into the mainline tends not to have trouble finding job offers.»

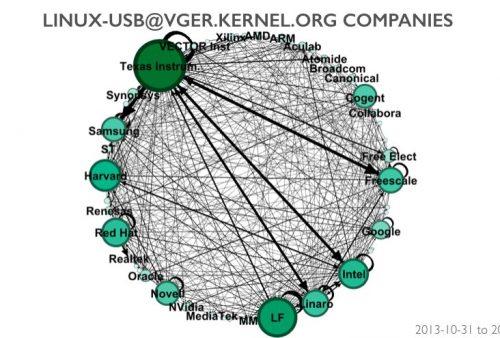

One of the interesting things about the Linux kernel is that the vast majority of people who contribute to it are employed by companies to do this work; however, most of the academic research on open source software assumes that participants are volunteers, contributing because of some personal need or altruistic motivation. Although this is true for some projects, this assumption just isn’t valid for projects like the Linux kernel.

Many kernel developers also collaborate with their competitors on a regular basis, where they interact with each other as individuals without focusing on the fact that their employers compete with each other.

Contrary to open-source folklore, it is mostly paid developers who are building the Linux kernel.

Kernel development follows a time-based release model, with a new release occurring every two to three months. This is designed to help speed development for all Linux distributions so that each one doesn’t need to make kernel-specific updates or changes. More than 6,100 individual developers from more than 600 different companies have contributed to the kernel since 2005, according to the report.

We are sick of not receiving updates shortly after buying new phones. Sick of the walled gardens deeply integrated into Android and iOS. That’s why we are developing a sustainable, privacy- and security-focused free software mobile OS that is modeled after traditional Linux distributions, with privilege separation in mind. Let’s keep our devices useful and safe until they physically break!

Mobian is an open-source project aimed at bringing Debian GNU/Linux to mobile devices.

We don’t need more Linux distributions. Stop making Linux distributions, make applications instead.

The programs we use every day belong to the application layer. The operating system provides certain functions that only it can perform, such as creating files, accessing information about the computer’s hardware, managing network connections, and so on.

These functions are protected by the operating system and cannot be executed directly by an ordinary program. To use them, the program must ask the operating system to execute them on its behalf; the operating system handles the request and returns the result. These requests are made through so-called “system calls” (syscalls in computing jargon), and they range from something as simple as creating a new file to something as complex as managing a network connection.
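To see this from a program’s point of view: in Python, the os module’s low-level file functions are thin wrappers that make the corresponding system calls, so the sketch below creates a file by asking the kernel to open, write and close it. create_file_with_syscalls is a hypothetical name.

```python
import os


def create_file_with_syscalls(path: str, data: bytes) -> int:
    """Create a new file and write data to it through thin system-call wrappers."""
    # os.open issues open(2)/openat(2); O_EXCL makes the kernel refuse if the file exists.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o644)
    try:
        written = 0
        while written < len(data):  # write(2) may write fewer bytes than requested
            written += os.write(fd, data[written:])
        return written
    finally:
        os.close(fd)  # close(2) releases the file descriptor
```

On Linux you can watch the requests go to the kernel with strace -e trace=openat,write,close python3 yourscript.py (modern C libraries implement open() with the openat system call).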

Holding down Alt and SysRq (which is the Print Screen key) while slowly typing REISUB will get you safely restarted. REISUO will do a shutdown rather than a restart.

Sounds like either an April Fools’ joke or some very strange magic akin to the old BIOS beeps we used to rely on to diagnose PC faults so bad that nothing would boot. Wikipedia comes to the rescue with an in-depth listing of all the SysRq keys.

R: Switch the keyboard from raw mode to XLATE mode

E: Send the SIGTERM signal to all processes except init

I: Send the SIGKILL signal to all processes except init

S: Sync all mounted filesystems

U: Remount all mounted filesystems in read-only mode

B: Immediately reboot the system, without unmounting partitions or syncing
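Whether these keys work from the keyboard depends on the kernel.sysrq setting (/proc/sys/kernel/sysrq): 0 disables SysRq, exactly 1 enables every function, and any other value is a bitmask of allowed function groups. The bit values below come from the kernel’s Magic SysRq documentation; allowed_keys is a hypothetical helper that reports which of the REISUB letters a given mask permits.

```python
# Bitmask values from the kernel's Magic SysRq documentation.
SYSRQ_GROUPS = {
    "R": 4,    # keyboard control (unRaw, SAK)
    "E": 64,   # signalling of processes (tErm)
    "I": 64,   # signalling of processes (kIll)
    "S": 16,   # Sync
    "U": 32,   # remoUnt read-only
    "B": 128,  # reBoot
    "O": 128,  # pOwer off (same group as reboot)
}


def allowed_keys(mask: int, keys: str = "REISUB") -> dict[str, bool]:
    """Report which SysRq letters a kernel.sysrq value allows from the keyboard."""
    if mask == 1:  # exactly 1 means "every function enabled"
        return {key: True for key in keys}
    return {key: bool(mask & SYSRQ_GROUPS[key]) for key in keys}
```

For example, a mask of 176 (128 + 32 + 16) allows S, U and B but not R, E or I, so REISUB only partially works. Read the current value with int(open("/proc/sys/kernel/sysrq").read()) and pass it to allowed_keys.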